The goal of our research is to collect and release feedback data that we and the broader AI research community can leverage over time. We also found that only 0.16% of BlenderBot’s responses to people were flagged as rude or inappropriate. It’s also twice as knowledgeable, while being factually incorrect 47% less often. Putting BlenderBot 3 to the TestĬompared with its predecessors, we found that BlenderBot 3 improved by 31% on conversational tasks. Over time, we will use this technique to make our models more responsible and safe for all users. We understand that not everyone who uses chatbots has good intentions, so we also developed new learning algorithm s to distinguish between helpful responses and harmful examples. Using this data, we can improve the model so that it doesn’t repeat its mistakes. When the chatbot’s response is unsatisfactory, we collect feedback on it. We trained BlenderBot 3 to learn from conversations to improve upon the skills people find most important - from talking about healthy recipes to finding child-friendly amenities in the city.

Many of the datasets used were collected by our own team, including one new dataset consisting of more than 20,000 conversations with people predicated on more than 1,000 topics of conversation. To improve BlenderBot 3’s ability to engage with people, we trained it with a large amount of publicly available language data.

You can also submit feedback in the chat itself.ĭeveloping a Safe Chatbot That Improves Itself

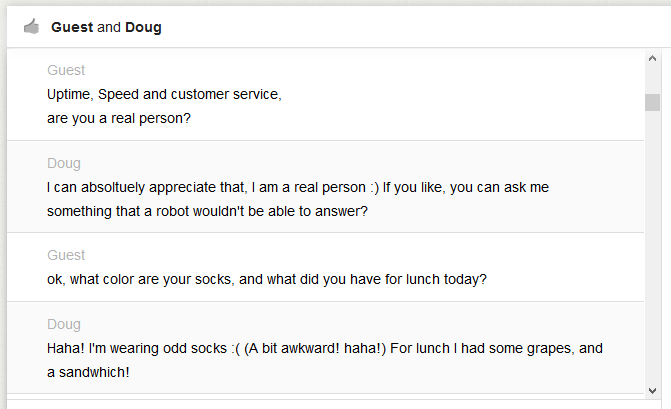

Choosing a thumbs-down lets you explain why you disliked the message - whether it was off-topic, nonsensical, rude, spam-like or something else. For example, you can react to each chat message in our BlenderBot 3 demo by clicking either the thumbs-up or thumbs-down icons. The Promise and Challenge of Chatting With HumansĪllowing an AI system to interact with people in the real world leads to longer, more diverse conversations, as well as more varied feedback. Despite this work, BlenderBot can still make rude or offensive comments, which is why we are collecting feedback that will help make future chatbots better. Since all conversational AI chatbots are known to sometimes mimic and generate unsafe, biased or offensive remarks, we’ve conducted large-scale studies, co-organized workshops and developed new techniques to create safeguards for BlenderBot 3. BlenderBot 3 inherits these skills and delivers superior performance because it’s built from Meta AI’s publicly available OPT-175B language model - approximately 58 times the size of BlenderBot 2. The BlenderBot series has made progress in combining conversational skills - like personality, empathy and knowledge - incorporating long-term memory, and searching the internet to carry out meaningful conversations. We will be sharing data from these interactions, and we’ve shared the BlenderBot 3 model and model cards with the scientific community to help advance research in conversational AI. Today, we’re releasing BlenderBot 3, our state-of-the-art conversational agent that can converse naturally with people, who can then provide feedback to the model on how to improve its responses. To build artificial intelligence (AI) systems that can interact with people in smarter, safer and more useful ways, we need to teach them to adapt to our needs.
